Overview of AI Security Courses

Courses and certifications in the field of AI Security & AI Red Teaming.

I've spent some time taking AI Security courses and certifications. Here's my overview of what's currently available, with their details and key differences.

Adversarial Machine Learning (Nvidia)

Level: Intermediate

Cost: $100 (~2 weeks)

TLDR: Deep & practical course on AI/LLM Security.

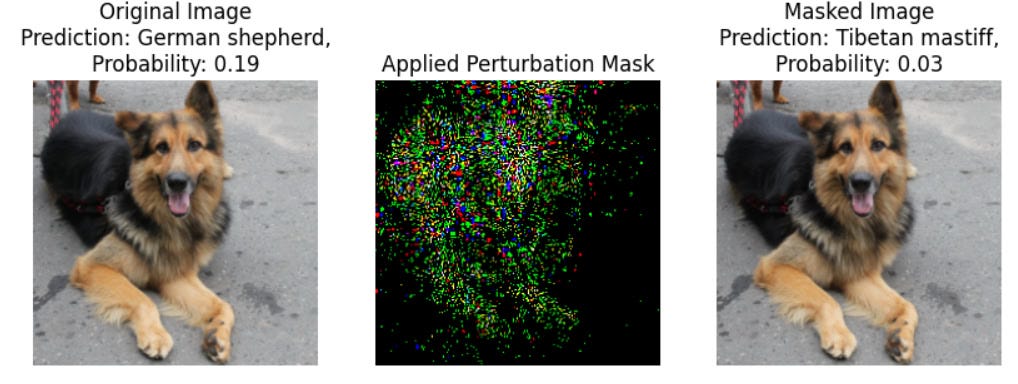

The course consists of 10+ interactive notebooks covering key AI Security topics like evasion attacks, model extraction, data poisoning, and attacks on LLMs / VLLMs.

The notebooks include videos, theory, usage examples, and lab exercises for each topic, hosted in a virtual environment. This is the best course I have found so far.

Lakera 101 AI Security

Level: Beginner

Cost: Free (10 days)

TLDR: LLM Security Intro

A 10-day email course focused mainly on LLMs. Each day covers one topic, such as prompt injections, jailbreaks, and application-level security. It's a good introduction to LLM Security with additional links to deeper materials if you want to learn more. You receive a certificate after 10 days of emails.

Red Teaming LLM Applications (Giskard AI)

Level: Beginner

Cost: Free (~2-3 hours)

TLDR: LLM Safety Intro

Covers AI Red Teaming basics with a focus on safety. The course teaches fundamental safety concepts such as AI Red Teaming, jailbreaks, and biases, and includes simple practical notebooks.

Certified AI/ML Pentester (C-AI/MLPen)

Level: Pre-intermediate

Cost: £250 (6 hours)

TLDR: Gandalf AI-like Exam

It is a certification with an exam in the style of the Gandalf game; you get a certificate after passing it. Despite the description, it covers only LLMs, and specifically prompt injections.

I don't really recommend it, as you can play Gandalf AI for free on the Lakera website.

PortSwigger: Web LLM attacks

Level: Pre-intermediate

Cost: Free (~2 hours)

TLDR: Security of LLM Applications

PortSwigger's Web Security Academy recently introduced a section dedicated to LLM security. It covers basic theory and labs on the application-level security of LLMs. The labs focus on topics such as prompt injections and vulnerabilities in function calling and APIs.

Note: As someone who usually enjoys solving PortSwigger labs, I found this module quite unrealistic. My impression is that it was added mainly to cover a trendy topic, without much effort, so I don't really recommend it for learning.

AI Safety Fundamentals: Alignment (Session 5)

Level: Pre-intermediate

Cost: Free (~4 hours per session)

TLDR: Adversarial attacks & Unlearning resources

This course covers AI Safety in general, but includes a session on AI Red Teaming.

The session consists of materials on adversarial attacks and LLM unlearning, as well as some relevant tasks. It can be completed independently or, if you are enrolled in the course, in a group.

Conclusion

Nvidia's Adversarial Machine Learning course seems to be the best option for a deep dive into the field, while Lakera 101 AI Security is better suited to a quicker introduction with more focus on LLMs.

Additionally, you can explore AI Safety Fundamentals, which has a stronger focus on AI Safety and includes lots of links to additional resources.

That's all I've found so far. Feel free to share any additional courses or certifications you're familiar with.